Learning AI/ML: The Hard Way

A learning guide on machine learning for beginners.

The Wave and the Curve

Data science, Artificial Intelligence (AI), and Machine Learning (ML): over the last five to six years these phrases have earned their places on Gartner’s hype cycle curve. Gradually they have crossed the peak and are moving toward the plateau. The curve also has a few related terms such as Deep Neural Network, Cognitive AutoML, etc. This shows that there is an emerging technology trend around AI/ML that is going to prevail over the software industry during the coming years. A few of their predecessors, such as Business Intelligence, Data Mining, and Data Warehousing, were around even before these years.

Finding the Crystal Ball in the Jungle

Prediction and forecasting being my favorite topics, I started looking for a way into this world of data and algorithms back in early 2019. Another driving force for me to learn AI/ML was my fascination with neural networks, which had been haunting me ever since I started learning computer science. I collected a few books and learned some Python skills to dive into the crystal ball.

While going through online articles, videos, and books, I discovered lots of readily available tools, libraries, and APIs for AI/ML. It was like trying to learn cycling and being handed a car to drive. Due to my interest in neural networks, I was drawn to the most interesting subset of AI/ML, Deep Learning, which deals with deep neural networks. I couldn’t stop myself from jumping directly into Google TensorFlow (a free Google ML tool), and I got overwhelmed by its huge collection of APIs. I could follow the documentation, write code, and even make it work. But there was a problem: I was unable to understand why I was doing what I was doing. I was completely drowning in terms like bias, variance, parameters, feature selection, feature scaling, dropout, etc. That’s when I took a break, rewound, and decided to learn the internals of AI/ML rather than just using the APIs and libraries blindly. So, I took the hard way.

On one side, I was allured by the readily available smart AI/ML tools, and on the other, my fascination with neural networks was pushing me to learn the subject from scratch. Meanwhile, I spent a month or two just looking for a path into the subject. The huge pool of internet resources left me thoroughly confused about where the doorway to the heart of the puzzle was. I realized why it is a hard nut for people to crack. Janakiram MSV pointed out the reasons correctly in his article.

However, some resources were very useful, such as Introduction to Machine Learning by Prof. Grimson from MIT OpenCourseWare. It is a little long, but helpful.

The Four Pillars of Learning AI

Gradually, I came to recognize four pillars for learning AI/ML.

- Data: Artificial Intelligence has always been a part of Computer Science studies. But because data to train the models was unavailable, people kept it aside. However, during the last five to six years many organizations, including government organizations, have shared their data for artificial intelligence and machine learning experiments. Here are a few of them.

1) Kaggle Datasets

2) Microsoft Research Open Data

3) U.S. Government’s Open Data

4) European Data Portal

5) UK Open Data

6) FiveThirtyEight

7) Socrata OpenData

8) AWS Public Data sets

9) UCI Machine Learning Repository

10) Quandl

11) The World Bank

12) /r/datasets

13) Google Dataset Search

14) Open Government Data Platform India

15) Wolfram Data Repository

16) Awesome Public Datasets

- Mathematics and Algorithm: My journey to the root of artificial intelligence dragged me back to school material on matrices, linear algebra, numerical analysis, calculus, statistics, etc. A few areas of mathematics come in very handy for understanding the algorithms, fine-tuning them, or modifying them if required. They are as below.

There is a sea of machine learning algorithms. Popular ones can be studied at

Those who want to go back to the basics of algorithms can find the courses below.

- Algorithms Specialization by Prof. Tim Roughgarden: Part 1

- Algorithms Specialization by Prof. Tim Roughgarden: Part 2

- Coding Platform: AI/ML code usually requires considerable memory and CPU speed, and arranging such resources on a personal computer might not be possible for everyone. However, some companies offer online coding platforms backed by such resources at a cheap price or at no cost at all, e.g.

- Education: Numerous courses are available nowadays, including the ones offered by renowned universities like

Apart from the above, many are offered by online education organizations, such as

- Deep Learning Specialization by Prof. Andrew Ng

- Several real college courses from Harvard, MIT, and more of the world's leading universities, offered as a collection of machine learning courses by edX

- Intro to Artificial Intelligence by Udacity

- PG Diploma in Data Science by UpGrad with certification from IIIT Bangalore

The Route Map Toward AI

After spending a long period of two to three months just searching for the right path to the crystal ball, I could draw a map connecting the core terminologies, such as Artificial Intelligence (AI), Machine Learning (ML), Data Science (DS), Deep Learning (DL), etc. I found a quick note on these terms.

"In layman’s terms, when we have a target system (not a software system) or environment, e.g. a community of people, weather, health, customers, citizens, businesses, animals, anything that we want to monitor, serve, influence, or control (as a personal, national, or business interest), we collect (or keep on collecting) data to capture events, facts, and figures about it on a regular basis and store the data in a location that eventually requires a large volume of space (data lake, data warehouse, or big data). Once the data is collected, some tools are applied to read or learn from the data (the process of data mining, machine learning, deep learning, etc.) and find the specific facts, figures, trends, or patterns (machine learning models) which the business (nation, person, group, etc.) is interested in, by applying some algorithms (machine learning algorithms). Once the learning and fact-finding are over and the machine learning models are generated, the models are used to predict the results of events (the prediction process). Further actions are decided based on those predictions. The actions can be performed to control the target (system or environment) using software (e.g. automated notifications, security checks, etc.), a device (e.g. IoT or robotics), or a person (e.g. sales promotions or offers to customers). The entire process, which begins with data collection and ends with the decided actions, can be termed Artificial Intelligence. However, John McCarthy, an American computer scientist, back in 1956 coined the term AI, defining it as “the science and engineering of making intelligent machines, especially intelligent computer programs”. Data science, on the other hand, covers almost everything related to data, which means it has cross-cutting areas with AI, ML, and DL. Data science addresses all the concerns of how, and which, data should be collected, stored, read, transferred, and processed.
The class of actions applied to automate the repetitive and routine tasks involved in producing and delivering products and services is part of automation. Apart from the decisions and actions derived from the knowledge of machine learning or deep learning, automation has several other areas, such as robotics, mechanical and electrical devices, etc."

Speeding Up

Now that I had the map, I accelerated with a target in mind: building my own neural network.

On my way, I found some cool videos which are really refreshing and ease the effort of biting on the hard nut. Those videos describe a few aggressive curriculums for learning AI, ML, and DS faster, for those who are really in a hurry, such as

- Learn Machine Learning in 3 Months (with curriculum)

- Learn Deep Learning in 6 Weeks

- Learn Data Science in 3 Months

Based on the conceptual map I could make out from my studies, a curriculum can be derived with fourteen primary milestones toward the crystal ball. By my estimate, the time required to complete the entire journey (except the last milestone, i.e. Continuous Practice) comes to approximately a year for someone starting afresh. People with prior skills in mathematics and coding can surely finish earlier.

Among the fourteen milestones, four belong to mathematics, three belong purely to coding, seven belong to studying courses with coding practice, and finally the last one is continuous practice, without which the world of data and algorithms might soon slip away from the practitioner. For each of the milestones we can take separate formal online training. However, it seemed easier for me to go through the videos and practice at my own pace, as time allowed.

Milestone 1: Linear Algebra

The Linear Algebra course videos offered by Khan Academy touch on all the topics in short videos, while the Linear Algebra course from MIT by Prof. Gilbert Strang goes into more detail, with comparatively longer videos and explanations.

Milestone 2: Statistics

The course on statistics offered by Khan Academy helps a lot with its concise video lectures. There is another course on statistics fundamentals by MIT as well.

Milestone 3: Probability Theory

I liked the fun-filled approach to probability theory presented by Siraj Raval. There is also a detailed course on probability theory by Harvard University available on YouTube.

Milestone 4: Calculus

Calculus is another branch of mathematics that helps in understanding neural networks, especially the gradient descent algorithm used to minimize errors in prediction. There is a nice and concise series of video lectures from MIT on multivariate calculus. There is a quick one from Imperial College London as well.
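
To make the link between calculus and gradient descent a little more concrete, here is a minimal sketch in Python with NumPy. The function `f`, its hand-derived gradient `grad_f`, and the helper `numerical_grad` are all illustrative assumptions and do not come from any of the courses mentioned here. The sketch only checks that partial derivatives worked out by hand agree with a finite-difference estimate, which is a common sanity check before trusting a gradient inside an optimizer.

```python
import numpy as np

def f(w):
    """Illustrative two-variable function: f(w) = w0^2 + 3*w0*w1 + 2*w1^2."""
    return w[0] ** 2 + 3 * w[0] * w[1] + 2 * w[1] ** 2

def grad_f(w):
    """Partial derivatives of f with respect to w0 and w1, derived by hand."""
    return np.array([2 * w[0] + 3 * w[1], 3 * w[0] + 4 * w[1]])

def numerical_grad(func, w, eps=1e-6):
    """Central-difference estimate of the gradient of func at the point w."""
    w = np.asarray(w, dtype=float)
    grad = np.zeros_like(w)
    for i in range(w.size):
        step = np.zeros_like(w)
        step[i] = eps
        grad[i] = (func(w + step) - func(w - step)) / (2 * eps)
    return grad

point = np.array([1.0, -2.0])
analytic = grad_f(point)
numeric = numerical_grad(f, point)
print("analytic gradient:", analytic)
print("numeric gradient: ", numeric)
```

If the two printed vectors disagree noticeably, the hand derivation is the first thing to re-check.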

Milestone 5: Numerical Computation

A concise lecture on numerical computation by Ian Goodfellow, one of the co-authors of the Deep Learning Book, can be found on YouTube.

Apart from the ones listed above, there is a concise course offered by MIT on mathematics focused on machine learning, called Mathematics of Machine Learning. This course covers most of the important mathematical concepts of machine learning. If the above list of mathematics resources pushes you into a jungle of beasts, here is a video that inspires a lot.

Milestone 6: Python

Though the R language was built for statistical computing and graphical representation of data, it lacks other features expected from a full-fledged, multi-purpose programming language, and that’s why Python took over the competition. Moreover, Python offers a sea of pre-built libraries for machine learning and data processing, which helped it become the most popular programming language for machine learning and AI. However, learning R helps a lot in understanding the mathematical and statistical concepts. R comes in very handy especially when a quick prototype or validation of a machine learning algorithm is needed. Learning R in parallel with Python is the best idea. Finally, Julia is the new kid on the block; it has some stronger features than Python and is a potential challenger.

Kaggle offers a course for learning Python with a focus on machine learning. A few Python packages are the basic ones to start with, such as

- NumPy: This is used for numerical computations

- Pandas: This is used for data handling, manipulation and processing

- Matplotlib: This is a library for data visualization

- Scikit-Learn: This is a library that provides a range of supervised and unsupervised learning algorithms, mainly focused on model building. It is also known as sklearn. In supervised learning, the model is trained with a labeled dataset (where both input and output are known), while unsupervised learning relies on training the model with an unlabeled dataset (where the input is known but the output is not).

- NLTK: It is a popular library for working with human language data, widely used in Natural Language Processing for classification, tokenization, stemming, tagging, parsing, and semantic reasoning

- Seaborn: It is an advanced library for graphical representation of data
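
The difference between supervised and unsupervised learning described in the Scikit-Learn entry can be sketched in a few lines. This is only an illustration under assumptions: the six data points and their labels are made up, and `KNeighborsClassifier` and `KMeans` are picked here simply as one convenient example of each kind of algorithm.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.neighbors import KNeighborsClassifier

# Toy data (made up for illustration): two well-separated groups of 2-D points.
X = np.array([[1.0, 1.1], [0.9, 1.0], [1.2, 0.8],
              [5.0, 5.2], [5.1, 4.9], [4.8, 5.0]])
y = np.array([0, 0, 0, 1, 1, 1])  # known outputs, used only in the supervised case

# Supervised: the model is trained on inputs AND their known labels.
clf = KNeighborsClassifier(n_neighbors=3).fit(X, y)
predicted_label = clf.predict([[4.9, 5.1]])[0]

# Unsupervised: the model sees only the inputs and groups them on its own.
km = KMeans(n_clusters=2, n_init=10, random_state=0).fit(X)
cluster_ids = km.labels_

print("predicted label for [4.9, 5.1]:", predicted_label)
print("cluster id per point:", cluster_ids)
```

Note that the cluster ids are arbitrary: the clustering may call the first group 1 and the second 0, because it never saw the labels.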

Milestone 7: Algorithm with Python

At this point, some hands-on practical experience with machine learning algorithms using Python’s Scikit-Learn library is very helpful as a quick check on the skills acquired so far and for gaining confidence for the next steps.
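
A first hands-on exercise of this kind might look like the sketch below. It is one possible starting point, not a prescribed workflow: the choice of the bundled iris dataset, `LogisticRegression`, the 75/25 split, and the `random_state` value are all assumptions made for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Load a small labeled dataset that ships with scikit-learn.
X, y = load_iris(return_X_y=True)

# Hold out a quarter of the samples so the model is scored on unseen data.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# Fit a simple classifier and measure accuracy on the held-out part.
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)
accuracy = accuracy_score(y_test, model.predict(X_test))
print(f"test accuracy: {accuracy:.2f}")
```

Swapping `LogisticRegression` for another estimator keeps the rest of the code unchanged, which makes this a handy template for trying out different algorithms.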

Milestone 8: Machine Learning Course

Once we are confident in our programming skills and in applying mathematical knowledge to machine learning, a formal course on machine learning helps in putting the pieces together in sequence. Prof. Andrew Ng, a pioneer in Artificial Intelligence, walks us through the concepts of machine learning and their real-world applications in his popular machine learning course. This course offers a free certification as well. The videos from this course are also available on YouTube. Since this is a concept-oriented course, it uses the GNU Octave language to demonstrate the ML concepts by building some prototypes. Octave is largely compatible with MATLAB (a multi-paradigm numerical computing environment and proprietary programming language developed by MathWorks).

For further reference, Google offers a crash course on machine learning. Google’s AI Education website provides the latest updates on Google’s AI progress, with lots of resources. Kaggle also offers a few micro-courses on data science for a quick start.

Milestone 9: Regression With Python

Regression and classification are the two major types of machine learning applications. Regression predicts a continuous output value for a given data sample, while classification assigns the data to given categories. After going through a machine learning course and understanding the concepts, we should try writing our own regression programs in Python, with both a single variable and multiple variables, using the gradient descent algorithm (to minimize the error or cost).
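
As a rough idea of what such a program can look like, here is a minimal sketch of single-variable linear regression trained with batch gradient descent. The data is synthetic (generated from y = 2x + 1 without noise), and the learning rate `alpha` and the iteration count are hypothetical choices that happen to work for this data, not recommended defaults.

```python
import numpy as np

# Synthetic data (an assumption for illustration): y = 2x + 1, no noise.
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 2.0 * x + 1.0

theta0, theta1 = 0.0, 0.0  # intercept and slope, both starting at zero
alpha = 0.05               # learning rate (hypothetical choice)
m = len(x)

for _ in range(5000):
    error = (theta0 + theta1 * x) - y  # prediction minus target for every sample
    grad0 = error.sum() / m            # partial derivative of the cost w.r.t. theta0
    grad1 = (error * x).sum() / m      # partial derivative of the cost w.r.t. theta1
    theta0 -= alpha * grad0            # simultaneous update of both parameters
    theta1 -= alpha * grad1

# Mean squared error cost, with the conventional factor 1/(2m).
cost = ((theta0 + theta1 * x - y) ** 2).sum() / (2 * m)
print("intercept:", theta0, "slope:", theta1, "cost:", cost)
```

With this data the learned intercept and slope should end up very close to 1 and 2. Raising `alpha` too far makes the updates overshoot and diverge, which is worth trying once to see the effect.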

Prof. Andrew Ng covers the topic of Linear Regression with a single variable in the videos below.

- Lecture 2.5 — Linear Regression with One Variable | Gradient Descent

- Lecture 2.6 — Linear Regression with One Variable | Gradient Descent Intuition

- Lecture 2.7 — Linear Regression with One Variable | Gradient Descent for Linear Regression

Linear Regression with multiple variables is described in the sessions below.

- Lecture 4.1 — Linear Regression with Multiple Variables - (Multiple Features)

- Lecture 4.2 — Linear Regression with Multiple Variables -- (Gradient Descent For Multiple Variables)

- Lecture 4.3 — Linear Regression with Multiple Variables | Gradient In Practical Feature Scaling

- Lecture 4.5 — Linear Regression with Multiple Variables | Features and Polynomial Regression

- Lecture 4.6 — Linear Regression with Multiple Variables | Normal Equation
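
The topics in this list (multiple features, feature scaling, and the normal equation) can be tried together in one short sketch. Everything concrete here is an assumption for illustration: the synthetic data, the coefficients 4, 3, and 0.01, the learning rate, and the iteration count. The sketch standardizes two features that live on very different scales, runs batch gradient descent, and compares the result with the closed-form normal equation solution on the same scaled data.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data: two features on very different scales (made up for illustration).
X_raw = np.column_stack([rng.uniform(0, 1, 50), rng.uniform(0, 1000, 50)])
y = 4.0 + 3.0 * X_raw[:, 0] + 0.01 * X_raw[:, 1]

# Feature scaling: zero mean and unit standard deviation per column.
mu, sigma = X_raw.mean(axis=0), X_raw.std(axis=0)
X = np.column_stack([np.ones(len(X_raw)), (X_raw - mu) / sigma])  # add bias column

# Batch gradient descent on the scaled features.
theta = np.zeros(X.shape[1])
alpha, m = 0.1, len(y)
for _ in range(2000):
    theta -= alpha * X.T @ (X @ theta - y) / m

# Normal equation on the same design matrix: solve (X^T X) theta = X^T y.
theta_exact = np.linalg.solve(X.T @ X, X.T @ y)

# Predict for a new sample, scaling it with the training mean and std.
new_sample = np.array([0.5, 500.0])
prediction = np.concatenate([[1.0], (new_sample - mu) / sigma]) @ theta

print("gradient descent:", theta)
print("normal equation: ", theta_exact)
print("prediction:", prediction)
```

Running the same loop on `X_raw` without scaling needs a far smaller learning rate and many more iterations, which is the practical reason for the scaling step.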

Milestone 10: Classification With Python

After the regression is done, we should try writing our own classification programs in Python. Prof. Andrew Ng covers them in his course in the sessions below.

- Lecture 6.5 — Logistic Regression | Simplified Cost Function and Gradient Descent

- Lecture 6.6 — Logistic Regression | Advanced Optimization

- Lecture 6.7 — Logistic Regression | MultiClass Classification OneVsAll

- Lecture 7.4 — Regularization | Regularized Logistic Regression
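
A small classification program along these lines could be sketched as follows: logistic regression trained by gradient descent, with L2 regularization applied to the weight but not to the intercept. The data (hours studied versus pass or fail) is made up, and `alpha`, `lam`, and the iteration count are hypothetical values chosen only so the example runs quickly.

```python
import numpy as np

def sigmoid(z):
    """Logistic function, mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

# Toy data (made up): hours studied and whether the student passed (1) or failed (0).
hours = np.array([0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0])
y = np.array([0, 0, 0, 0, 1, 1, 1, 1])

# Standardize the feature and add a bias column of ones.
x_scaled = (hours - hours.mean()) / hours.std()
X = np.column_stack([np.ones_like(x_scaled), x_scaled])

theta = np.zeros(2)
alpha, lam, m = 0.1, 0.1, len(y)  # learning rate and regularization strength

for _ in range(2000):
    h = sigmoid(X @ theta)            # predicted probabilities
    grad = X.T @ (h - y) / m          # gradient of the logistic cost
    grad[1:] += lam / m * theta[1:]   # L2 penalty, skipping the intercept
    theta -= alpha * grad

# Classify with a 0.5 probability threshold.
predictions = (sigmoid(X @ theta) >= 0.5).astype(int)
print("theta:", theta)
print("predictions:", predictions)
```

For more than two classes, the one-vs-all idea from the lecture list amounts to training one such model per class and picking the class whose model reports the highest probability.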

Milestone 11: Data Visualization

Without visualizing the data and analyzing its patterns, selecting algorithms for machine learning becomes nearly impossible. Machine learning professionals must know the techniques of data visualization. Here is a quick look at the need and purpose for data visualization.

Kaggle’s micro-courses on data visualization are targeted at ML learners, and also take a non-coder-to-coder perspective. IBM also offers a course on data visualization through the Coursera platform.

Milestone 12: Deep Learning and Neural Networks

At this point I am at the door of my favorite subject: neural networks. Python’s Scikit-Learn has a neural network sub-package (i.e. sklearn.neural_network), but this milestone takes us deeper into the world of neural networks and the algorithms involved in them. The Deep Learning Specialization course series delivered by Prof. Andrew Ng on Coursera is the most popular of all the courses. It is also available on the deeplearning.ai website, and its videos are available on YouTube as well. The specialization has five courses.

- Neural Network & Deep Learning

- Improving Deep Neural Networks

- Structuring ML Projects

- Convolutional Neural Network

- Recurrent Neural Network (Sequence Models)

This is a paid course with nominal charges. A certificate is provided on completion of the course.
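
Before going deep, the `sklearn.neural_network` sub-package mentioned above is enough for a first small experiment. The sketch below is only that: the iris dataset, the single hidden layer of 8 units, `max_iter`, and the `random_state` values are arbitrary choices for illustration, and a real deep learning framework would be the tool for anything larger.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Scale the inputs, then train a small feed-forward network with one hidden layer.
net = make_pipeline(
    StandardScaler(),
    MLPClassifier(hidden_layer_sizes=(8,), max_iter=2000, random_state=0),
)
net.fit(X_train, y_train)
test_accuracy = net.score(X_test, y_test)

# Weight matrices: 4 inputs -> 8 hidden units -> 3 output classes.
layer_shapes = [w.shape for w in net.named_steps["mlpclassifier"].coefs_]
print("test accuracy:", test_accuracy)
print("weight matrix shapes:", layer_shapes)
```

Looking at the weight matrix shapes is a quick way to connect the code to the layer diagrams used in the course videos.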

Milestone 13: Deep Learning Frameworks

Among the variety of machine learning techniques, deep learning has already become the de facto standard for most AI/ML applications. There are many deep learning frameworks out there today, e.g.

- TensorFlow by Google (most popular)

- Keras (a wrapper which uses TensorFlow as the backend)

- PyTorch by Facebook

- Apache MXNet by Amazon

- MATLAB by MathWorks

- Caffe by Berkeley AI Research (BAIR)

- Chainer

- Deeplearning4j for Java based deep learning

- The Microsoft Cognitive Toolkit (CNTK)

- ONNX by Facebook and Microsoft

Here is a quick video to go over the list. There are numerous videos and tutorials on these frameworks on YouTube, but it’s a good option to learn them directly from the resources on their websites. Here are a few video resources on TensorFlow, PyTorch, MXNet, CNTK, Chainer, and Caffe. Skills in these tools can be real assets in today’s world for anyone interested in building a career in machine learning and AI. With these skills it becomes easy to get control of the crystal ball.

Milestone 14: AutoML

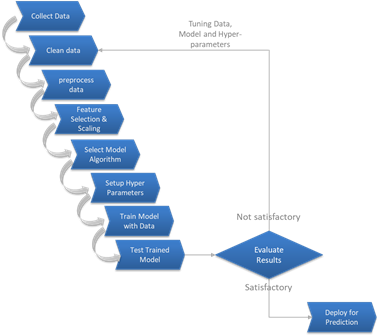

Using machine learning has its own pain areas, such as cleaning the data, selecting relevant features, selecting the appropriate algorithm or model for a given dataset, selecting the correct configuration, and tuning the hyper-parameters for the best results from that model. These steps can consume long hours since they involve lots of trial and guesswork. A typical ML workflow is given below.

Typical ML Workflow

The purpose of automated ML (AutoML) is to automate these steps to arrive at the right configuration, so that the best possible results can be achieved within the shortest time. AutoML is here to help data scientists get rid of the repetitive tasks and concentrate on analyzing the data and selecting the algorithm based on their business domain knowledge. There are plenty of AutoML tools and frameworks available these days, such as Auto-SKLearn, MLBox, TPOT, BigML, H2O, Auto-Keras, TransmogrifAI, DataRobot, FastAI, Google's AutoML, Amazon SageMaker Autopilot, and many more.
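
To get a feel for the kind of search these tools automate, here is a deliberately small sketch using plain Scikit-Learn. It is not one of the AutoML tools listed above and covers only hyper-parameter tuning, one slice of what they do. The pipeline, the parameter grid, and the dataset are assumptions chosen for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# A preprocessing step followed by a model, treated as one estimator.
pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("knn", KNeighborsClassifier()),
])

# Candidate hyper-parameter values to try (hypothetical grid).
param_grid = {
    "knn__n_neighbors": [1, 3, 5, 7, 9],
    "knn__weights": ["uniform", "distance"],
}

# Try every combination with 5-fold cross-validation and keep the best one.
search = GridSearchCV(pipeline, param_grid, cv=5)
search.fit(X, y)

print("best parameters:", search.best_params_)
print("best cross-validated accuracy:", search.best_score_)
```

AutoML frameworks extend this idea to the choice of algorithm, feature processing, and smarter search strategies than an exhaustive grid.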

Continuous Practice With AI

The most important part is to continue learning and practicing AI/ML techniques, models, tools, and frameworks, and applying them in your applications for business and life. Since most AI/ML applications require large memory and processing resources, it might not be possible to try them out on our personal computers. However, there are a few platforms which let us practice our ML skills for free. Some of them also provide datasets and arrange contests to promote the effort. Here are a few of them: Google Colab, Kaggle, MachineHack, and OpenML.

Refreshing Energy Boosters

During the journey toward the crystal ball, when it feels too tiring, here are a few quick booster videos and resources to refresh and re-motivate. There are many more on YouTube.

- Interviews with Heroes of Deep Learning

- How to prepare a dataset

- But what is a Neural Network?

- Solving A Kaggle Challenge

- How to Make an Amazing TensorFlow Chatbot Easily

- Image Classifier with TensorFlow

- Back propagation in Neural Network with an example

- Derivation: Error Backpropagation & Gradient Descent for Neural Networks

- Build an AI Startup

- AI in 2019

- Sentimental Analysis

- Natural Language Processing

- Making money with ML

- Predicting Stock Prices

- Best programming languages for ML

- TensorFlow Playground

- OpenAI: Discovering and enacting the path to safe artificial general intelligence

